OMSDC’s curated networking platform started as a frustration, survived a health scare, and became a case study in how mission-driven organizations can build tools that serve their members and sustain their work.

Jamie Van Doren was supposed to help organize and run DealMaker at ConnectingOHIO 2025. He had a plan. It was analog, but it was a start.

On April 30, he had a heart attack. More than one, actually. On May 11, he went under for a triple bypass.

He came back in late June, just in time to help support the event. And what he saw was a team working incredibly hard to do something that shouldn’t have been so difficult. Every curated meeting between a corporate member and a minority business enterprise (MBE) supplier ran through emails, spreadsheets, and the institutional knowledge of one person trying to match 400-plus MBEs to specific corporate needs. The follow-up alone was a full-time job. The matching was well-intentioned but limited by what any single person can hold in their head.

“This isn’t scalable,” Van Doren remembers thinking. “And it isn’t just an efficiency problem. It’s a mission problem. If we can’t connect the right people reliably, we’re leaving our most important value on the table.”

From Pitch to Product

By January 2026, Van Doren had an idea he wanted to test. OMSDC’s Annual Meeting was coming up in March. Open networking is a staple of events like it, but open networking leaves outcomes to chance. The people who need to meet each other often don’t. The introverts hang back. The time runs out. The most valuable conversations happen accidentally or not at all.

Van Doren had experienced a better model years earlier. While fundraising for his first tech startup in 2020 and 2021, he’d used a curated virtual meeting platform that scheduled one-on-one sessions with venture capital firms. The structure worked. Every meeting was intentional. Every slot was used.

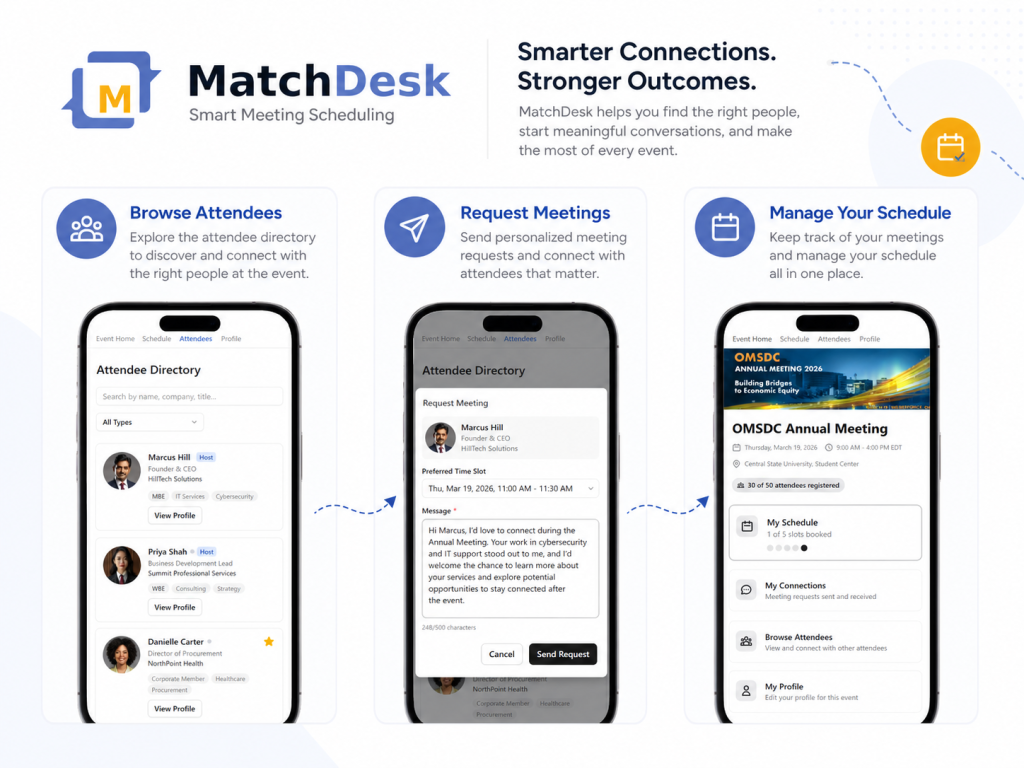

He pitched the concept to OMSDC’s leadership: a curated one-on-one networking platform for the Annual Meeting. Attendees would browse a directory, request meetings with specific people, and both parties would opt in before anything was scheduled. The system would then generate a conflict-free schedule automatically. No spreadsheets. No email chains. No collisions.

The team decided to trust him. George Simms, OMSDC’s President and CEO, saw something beyond a scheduling tool. “This is what supplier inclusion looks like when it’s hardwired into the way we operate,” Simms says. “We talk about connecting minority businesses to opportunity. This is infrastructure that makes that connection structured and repeatable, not something that depends on who happens to be standing next to whom at a reception.”

Simms also saw a broader signal. Van Doren is a Latino tech founder building enterprise software with AI tools, inside a minority business support organization. “That’s the kind of innovation and excellence we exist to spotlight,” Simms says. “It’s one thing to advocate for minority-owned businesses. It’s another to have one of our own people build the tool that solves the problem.”

Building It: AI as Developer, Not Magic Wand

Van Doren looked into off-the-shelf solutions first. The pricing was a non-starter. Competitors charge $3,000 to $10,000 or more per event. For a nonprofit running multiple events a year, those numbers don’t work. And Van Doren wasn’t convinced the features would match what OMSDC actually needed.

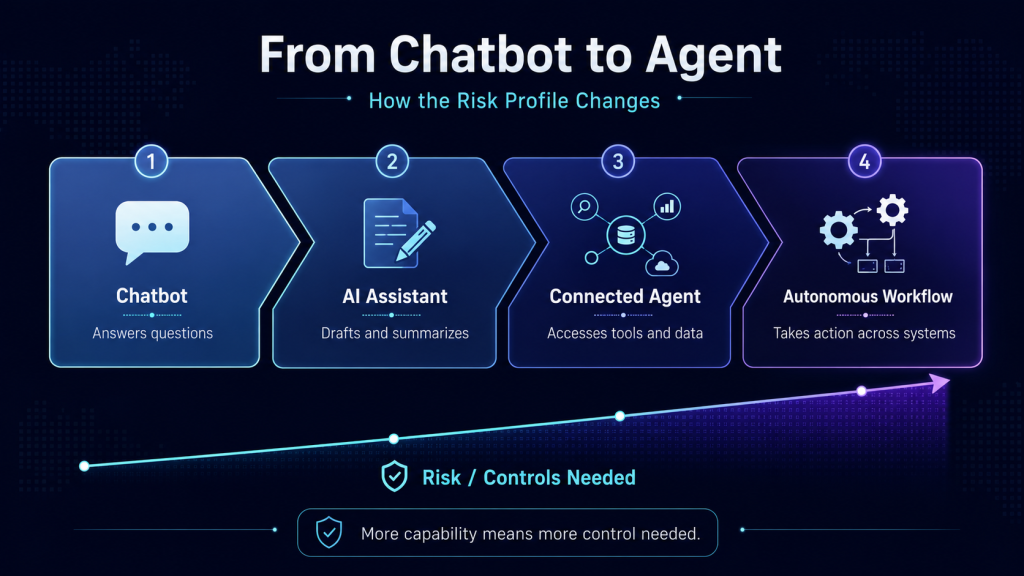

So he decided to build it himself. Not from scratch in the traditional sense, but not with a wave-of-the-hand prompt either. Van Doren has built two tech companies and led developer teams. In 2020 he co-founded NeverEnding, a company that was building a custom AI model for animation before the large language model wave hit the mainstream. He knew how to put together a product requirements document and a technical roadmap. The difference this time was the developer: Claude Code.

“Using AI to code isn’t like how they advertise it,” Van Doren says. “Not if you want something stable, scalable, and enterprise-ready. You can’t just say ‘build this app.’ Our platform needed real security, complex scheduling logic, multi-tenant architecture, role-based access. So we built it the way you’d build any serious software product: with a PRD, slice by slice, with multiple rounds of testing and deployment.”

Even with AI as the developer, the build was intense. Two months of ten- to twelve-hour days, seven days a week, reviewing and testing code daily. That’s not the effortless “just prompt it” story that AI marketing likes to tell. But it’s dramatically faster than the six to eight months the same product would take a team of two or three developers plus a project lead. The difference isn’t that AI eliminates the work. It’s that it compresses a team’s worth of output into one person’s timeline, as long as that person knows how to structure and lead a build.

James Price, OMSDC’s Associate Vice President of Operations, sees MatchDesk as evidence of OMSDC walking the walk. “We tell our MBEs they need to be looking at where AI fits in their organizations and how they can leverage it to compete,” Price says. “Creating MatchDesk is a perfect example of us not just telling, but showing.”

Here’s what it looks like in practice. MatchDesk is a 1:1 meeting scheduling platform designed for B2B events. Attendees browse a branded directory, send meeting requests with a personal message, and both sides confirm before anything is booked. The system then generates a conflict-free schedule for every participant. Organizers get real-time analytics on enrollment, meeting rates, and engagement.
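For readers who want to picture the mechanics, the flow described above (a request, a confirmation from the other side, then a schedule with no collisions) can be sketched in a few lines of Python. This is an illustrative sketch only, not MatchDesk's actual code: the names `MeetingRequest`, `confirmed_pairs`, and `build_schedule`, the sample attendees, and the simple first-free-slot strategy are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class MeetingRequest:
    """Hypothetical record of one attendee asking another for a meeting."""
    requester: str
    invitee: str
    message: str
    accepted: bool = False  # True only once the invitee has confirmed


def confirmed_pairs(requests):
    """Keep only requests where the invitee has also opted in."""
    return [(r.requester, r.invitee) for r in requests if r.accepted]


def build_schedule(pairs, slots):
    """Give each confirmed pair the earliest slot in which both people are free.

    Returns (schedule, unscheduled). A pair lands in `unscheduled` when no
    remaining slot works for both people.
    """
    busy = {slot: set() for slot in slots}  # slot -> people already booked
    schedule = {}
    unscheduled = []
    for a, b in pairs:
        for slot in slots:
            if a not in busy[slot] and b not in busy[slot]:
                busy[slot].update((a, b))
                schedule[(a, b)] = slot
                break
        else:
            unscheduled.append((a, b))
    return schedule, unscheduled


# Made-up attendees and time slots, purely for illustration.
requests = [
    MeetingRequest("Acme Corp", "Bright MBE", "Let's talk logistics.", accepted=True),
    MeetingRequest("Acme Corp", "Cedar MBE", "Interested in your services.", accepted=True),
    MeetingRequest("Delta Corp", "Bright MBE", "Following up from last year.", accepted=True),
    MeetingRequest("Delta Corp", "Cedar MBE", "Quick intro?", accepted=False),
]
slots = ["10:00", "10:20"]

schedule, unscheduled = build_schedule(confirmed_pairs(requests), slots)
for (a, b), slot in schedule.items():
    print(f"{slot}: {a} meets {b}")
```

The unconfirmed request never reaches the scheduler, and no attendee appears twice in the same slot. A first-free-slot pass like this is the simplest approach that avoids collisions; a production system would likely need something more thorough to fit as many meetings as possible into a tight agenda.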

Critically, Van Doren didn’t build MatchDesk just for OMSDC. It’s a multi-tenant system, meaning any organization, whether a chamber of commerce, a council, an accelerator, or a trade association, can create an account, apply its own branding, and run structured networking events through the platform. Pricing starts with a free tier and scales to $499 per year for enterprise use, a fraction of what incumbents charge for a single event.
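In software terms, multi-tenancy means one running system serves many organizations while keeping each one's branding and data separate. The Python sketch below shows that idea in miniature. It is a hypothetical illustration, not a description of how MatchDesk is implemented: the `Tenant` and `Platform` classes, the `brand_color` field, and the sample organizations and events are all invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Tenant:
    """One organization's account, with its own branding and events."""
    tenant_id: str
    display_name: str
    brand_color: str  # hypothetical branding setting
    events: dict = field(default_factory=dict)  # event_id -> event name


class Platform:
    """A single shared system that keeps every tenant's data separate."""

    def __init__(self):
        self._tenants = {}

    def create_tenant(self, tenant_id, display_name, brand_color):
        if tenant_id in self._tenants:
            raise ValueError(f"tenant {tenant_id!r} already exists")
        tenant = Tenant(tenant_id, display_name, brand_color)
        self._tenants[tenant_id] = tenant
        return tenant

    def add_event(self, tenant_id, event_id, name):
        self._tenants[tenant_id].events[event_id] = name

    def get_event(self, tenant_id, event_id):
        """Look up an event only inside the named tenant's own data."""
        events = self._tenants[tenant_id].events
        if event_id not in events:
            raise KeyError(f"no event {event_id!r} for tenant {tenant_id!r}")
        return events[event_id]


# Two sample organizations sharing one platform (illustrative values only).
platform = Platform()
platform.create_tenant("omsdc", "OMSDC", "#003366")
platform.create_tenant("example-chamber", "Example Chamber of Commerce", "#aa3300")
platform.add_event("omsdc", "annual-2026", "Annual Meeting")

print(platform.get_event("omsdc", "annual-2026"))
```

Because every lookup is scoped by tenant, asking for `annual-2026` under `example-chamber` raises a `KeyError` rather than exposing another organization's event.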

The First Deployment: Honest Lessons

MatchDesk launched at OMSDC’s 2026 Annual Meeting at Central State University. Signups were strong. But the event ran behind schedule, and as a result, fewer live meetings happened than were scheduled. The gap between scheduled and completed meetings surfaced a real operational lesson: structured matchmaking only works if the event itself protects the time it needs.

“It actually elevated something important for us,” Van Doren says. “We were packing too much in. The tool did what it was supposed to do. But we learned that if we want curated meetings to deliver, we have to give them room to breathe on the agenda.”

Price sees the tool as a way to shift where his team spends its energy. “Before MatchDesk, facilitating introductions between corporate members and MBEs meant a lot of manual coordination. Emails, spreadsheets, follow-ups. It worked, but it consumed time that could have gone toward building deeper relationships and solving real problems for our members,” Price says. “What excites me is getting out of the administration business and into the relationship business. I want our team focused on connecting people and creating value, not managing spreadsheets.”

The Bigger Thesis: Why Nonprofits Need to Build

Behind MatchDesk is a larger argument about how mission-driven organizations sustain themselves.

Most nonprofits operate at the mercy of a few revenue streams: donations, sponsorships, membership dues, and grants. One or two bad years can be devastating. And even in good years, there’s often not enough funding to do the work the organization knows needs to be done. For many nonprofits, the mission is clear. The resources to fulfill it are not.

Van Doren believes nonprofits have an underexplored path: building products and services that create genuine member benefit while also generating revenue. Not merchandise. Not another gala tier. Real tools that solve real problems for the people the organization serves.

“Nonprofits are mission-driven,” Van Doren says. “That’s actually an advantage when it comes to product development. You’re building for the people you serve every day. You understand their problems because you live inside them. And because you’re not answering to shareholders, you’re less likely to make the kind of short-term decisions that erode trust.”

MatchDesk is one version of what that looks like. It started as a solution to OMSDC’s own operational problem. It’s now a product that any similar organization can use. The revenue it generates supports the mission it was built to serve.

“We talk a lot about how MBEs need to diversify revenue and build resilience,” Van Doren says. “The organizations that support them need to do the same thing.”

What’s Next

The MatchDesk team is now building out sponsor management and activation features, extending the platform’s value from attendee matchmaking into event monetization. The goal is to give organizations a single tool that handles the two things they struggle with most at events: making sure the right people meet, and making sure sponsors see measurable return.

For OMSDC, the next test will be DealMaker and future ConnectingOHIO events, where the combination of structured matchmaking and tighter event programming should close the gap between scheduled meetings and completed ones.

“The tool works,” Van Doren says. “Now we need to make sure everything around it works just as well.”

MatchDesk is currently accepting early-access signups at getmatchdesk.com. The first 100 organizations to sign up receive 50% off their first year.